As a team focused on improving patient experience, we’ve always paid attention to how and where patients seek education in their disease and treatment journey. Artificial intelligence is now one more element for us to consider as we design educational experiences that help patients feel supported and empowered, rather than misinformed, overwhelmed, or, at worst, led to make unsafe healthcare decisions.

In January 2026, ChatGPT and Claude launched beta versions of health-specific large language models (LLMs), which let users connect their electronic health records and health-tracking devices so that the tools can give more personalized responses to health-related questions.

Using the internet to gather health-related information is not new. However, the influx of patients using LLMs over the last few years to answer questions about symptoms and conditions brings considerable potential risk that users should be mindful of.

With a lens on what it takes to develop trustworthy patient educational materials, we wanted to better understand what it is about using LLMs like ChatGPT that exposes patients to potential danger. Based on our research, here are a few points that we found particularly interesting:

- Misplaced trust: A study published in Nature last year shows that LLMs can lean toward being “helpful,” prioritizing emotional validation over logically sound information.

- Prompt-dependency: To recommend a correct course of action or answer medical questions accurately, LLMs require users to ask “the right way.” Neither patients nor LLMs have the expertise that healthcare professionals (HCPs) have to sort through symptoms or ask follow-up questions to make sure all the critical information is presented.

- Low accuracy: Another study published in Nature last month found that patients trying to get a diagnosis through an LLM were no more successful than those looking things up through a traditional search engine. This can lead to missed high-risk emergencies and potentially dangerous misdiagnoses.

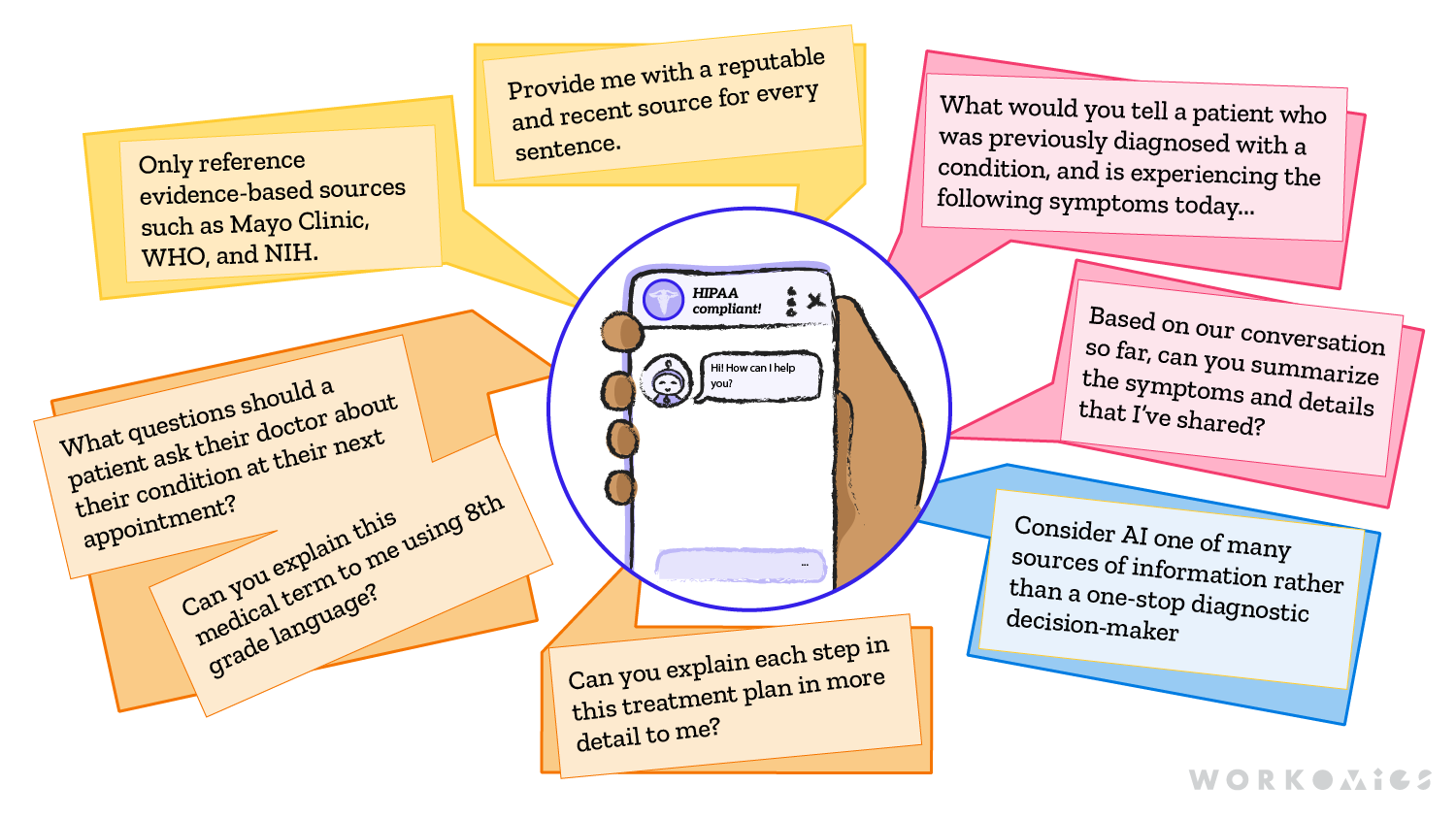

Given these potential harms, what can patients who choose to use LLMs for health-related questions do? Last year, The New York Times published an article providing suggestions for how patients could use LLMs to have helpful, and hopefully less risky, conversations. These include:

- Use LLMs for concrete tasks such as compiling questions to ask HCPs, simplifying medical jargon, or explaining a diagnosis or treatment plan

- Only share relevant personal information, and ask the LLM to play it back to you during the conversation to ensure it hasn’t hallucinated any details

- Look for tools that are HIPAA-compliant, so that patient information is kept secure

- Ask the LLM for sources for the information it is providing

- And most importantly, consider AI one of many sources of information rather than a one-stop diagnostic decision-maker

Whatever the risks and benefits, patients are turning to LLMs as a channel for information and support. Our hope for patients moving forward is that they think critically whenever they use these tools, so that they can become active and informed participants in their care without compromising their safety.
